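The System V API is the more traditional family of shared memory interfaces. It suits classic IPC scenarios that need low-level control.

Basic API

shmget: obtaining shared memory

Use the shmget function to obtain a shared memory segment. Its prototype is: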

```c
int shmget(key_t key, size_t size, int shmflg);
```

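shmget returns an identifier, not a usable memory address.

shmat: attaching shared memory

Use the shmat function to attach the shared memory segment to a virtual address in the calling process. Its prototype is: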

```c
void *shmat(int shmid, const void *shmaddr, int shmflg);
```

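shmdt: detaching shared memory

When a process no longer needs the shared memory, it should remove the association between the segment and its virtual address. This is done with the shmdt function, whose prototype is: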

```c
int shmdt(const void *shmaddr);
```

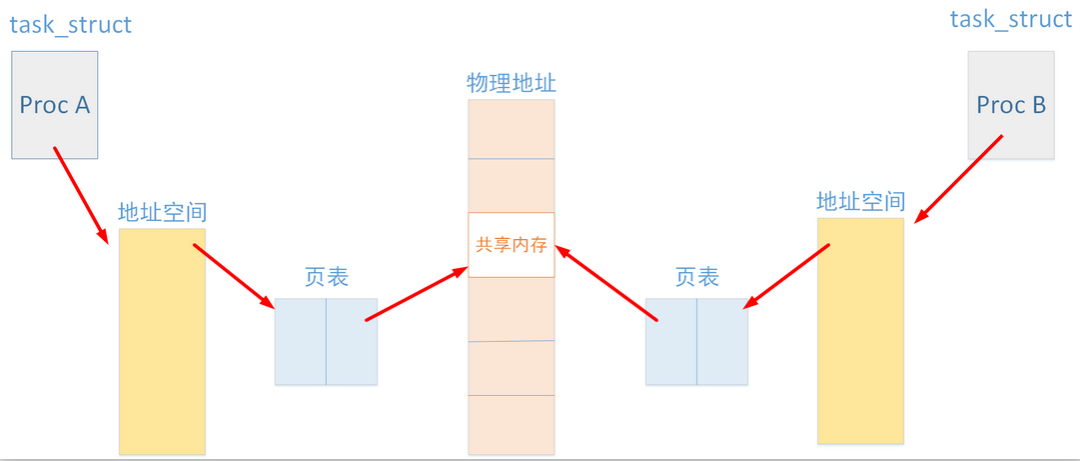

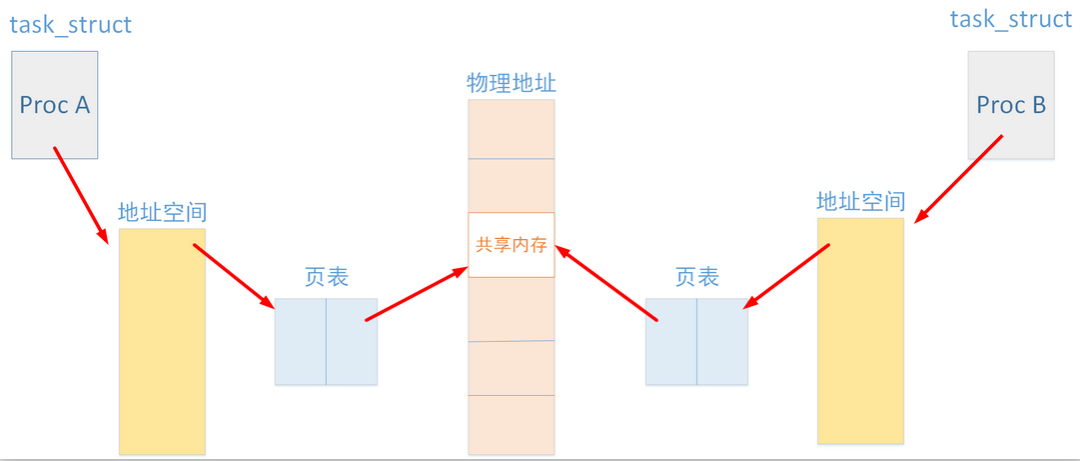

How shared memory works

In short, shared memory works by mapping virtual addresses in different processes to the same physical memory.

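In the Linux kernel version analysed here, every shared memory segment is managed by a struct named shmid_kernel, and the number of segments that can exist system-wide is capped at SHMMNI, which is 128 in this version. Newer kernels use a larger, tunable default.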

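```c
/* Per-segment bookkeeping that is also visible to user space. */
struct shmid_ds {
    struct ipc_perm     shm_perm;    /* operation permissions */
    int                 shm_segsz;   /* size of segment in bytes */
    __kernel_time_t     shm_atime;   /* last attach time */
    __kernel_time_t     shm_dtime;   /* last detach time */
    __kernel_time_t     shm_ctime;   /* last change time */
    __kernel_ipc_pid_t  shm_cpid;    /* pid of creator */
    __kernel_ipc_pid_t  shm_lpid;    /* pid of last operator */
    unsigned short      shm_nattch;  /* number of current attaches */
    unsigned short      shm_unused;
    void               *shm_unused2;
    void               *shm_unused3;
};

struct shmid_kernel
{
    struct shmid_ds        u;
    unsigned long          shm_npages; /* size of segment in pages */
    pte_t                 *shm_pages;  /* one page table entry per page */
    struct vm_area_struct *attaches;   /* list of current attachments */
};

/* Global table of all segments, indexed by slot number. */
static struct shmid_kernel *shm_segs[SHMMNI];
```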

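Implementation of shmget

The implementation of shmget is fairly simple. It first calls findkey to check whether a segment with the given key already exists; findkey returns the segment's index in the shm_segs array. If the segment is found, shmget simply returns its identifier. Otherwise it calls newseg to create a new segment. newseg is also simple: it allocates a new shmid_kernel struct, fills in its fields, and stores it in the shm_segs array.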

```c
asmlinkage long sys_shmget (key_t key, int size, int shmflg)
{
    struct shmid_kernel *shp;
    int err, id = 0;

    down(&current->mm->mmap_sem);
    spin_lock(&shm_lock);
    if (size < 0 || size > shmmax) {
        err = -EINVAL;
    } else if (key == IPC_PRIVATE) {
        /* IPC_PRIVATE always creates a new segment. */
        err = newseg(key, shmflg, size);
    } else if ((id = findkey (key)) == -1) {
        /* No segment with this key yet: create one only if asked to. */
        if (!(shmflg & IPC_CREAT))
            err = -ENOENT;
        else
            err = newseg(key, shmflg, size);
    } else if ((shmflg & IPC_CREAT) && (shmflg & IPC_EXCL)) {
        /* The key exists but the caller demanded exclusive creation. */
        err = -EEXIST;
    } else {
        shp = shm_segs[id];
        if (shp->u.shm_perm.mode & SHM_DEST)
            err = -EIDRM;
        else if (size > shp->u.shm_segsz)
            err = -EINVAL;
        else if (ipcperms (&shp->u.shm_perm, shmflg))
            err = -EACCES;
        else
            /* Identifier = sequence number * SHMMNI + slot index. */
            err = (int) shp->u.shm_perm.seq * SHMMNI + id;
    }
    spin_unlock(&shm_lock);
    up(&current->mm->mmap_sem);
    return err;
}
```

Implementation of shmat

```c
asmlinkage long sys_shmat (int shmid, char *shmaddr, int shmflg, ulong *raddr)
{
    struct shmid_kernel *shp;
    struct vm_area_struct *shmd;
    int err = -EINVAL;
    unsigned int id;
    unsigned long addr;
    unsigned long len;

    down(&current->mm->mmap_sem);
    spin_lock(&shm_lock);
    if (shmid < 0)
        goto out;

    /* The low part of the identifier selects the slot in shm_segs. */
    shp = shm_segs[id = (unsigned int) shmid % SHMMNI];
    if (shp == IPC_UNUSED || shp == IPC_NOID)
        goto out;

    if (!(addr = (ulong) shmaddr)) {
        /* No address requested: let the kernel pick an SHMLBA-aligned one. */
        if (shmflg & SHM_REMAP)
            goto out;
        err = -ENOMEM;
        addr = 0;
    again:
        if (!(addr = get_unmapped_area(addr, shp->u.shm_segsz)))
            goto out;
        if (addr & (SHMLBA - 1)) {
            addr = (addr + (SHMLBA - 1)) & ~(SHMLBA - 1);
            goto again;
        }
    } else if (addr & (SHMLBA-1)) {
        /* Misaligned caller address: round down only if SHM_RND is set. */
        if (shmflg & SHM_RND)
            addr &= ~(SHMLBA-1);
        else
            goto out;
    }

    /* Drop the spinlock around the allocation, then take it again. */
    spin_unlock(&shm_lock);
    err = -ENOMEM;
    shmd = kmem_cache_alloc(vm_area_cachep, SLAB_KERNEL);
    spin_lock(&shm_lock);
    if (!shmd)
        goto out;
    /* Re-check the segment: it may have changed while the lock was dropped. */
    if ((shp != shm_segs[id]) || (shp->u.shm_perm.seq != (unsigned int) shmid / SHMMNI)) {
        kmem_cache_free(vm_area_cachep, shmd);
        err = -EIDRM;
        goto out;
    }

    /* Describe the new virtual memory area; no physical page is allocated here. */
    shmd->vm_private_data = shm_segs + id;
    shmd->vm_start = addr;
    shmd->vm_end = addr + shp->shm_npages * PAGE_SIZE;
    shmd->vm_mm = current->mm;
    shmd->vm_page_prot = (shmflg & SHM_RDONLY) ? PAGE_READONLY : PAGE_SHARED;
    shmd->vm_flags = VM_SHM | VM_MAYSHARE | VM_SHARED
                     | VM_MAYREAD | VM_MAYEXEC | VM_READ | VM_EXEC
                     | ((shmflg & SHM_RDONLY) ? 0 : VM_MAYWRITE | VM_WRITE);
    shmd->vm_file = NULL;
    shmd->vm_offset = 0;
    shmd->vm_ops = &shm_vm_ops;   /* page faults are routed to shm_nopage */

    shp->u.shm_nattch++;
    spin_unlock(&shm_lock);
    err = shm_map(shmd);
    spin_lock(&shm_lock);
    if (err)
        goto failed_shm_map;

    insert_attach(shp,shmd);

    shp->u.shm_lpid = current->pid;
    shp->u.shm_atime = CURRENT_TIME;

    *raddr = addr;   /* the attach address is handed back through raddr */
    err = 0;
out:
    spin_unlock(&shm_lock);
    up(&current->mm->mmap_sem);
    return err;
    ...
}
```

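As the code shows, shmat only reserves virtual address space in the process; no physical memory is allocated for the segment at this point. The physical pages are supplied later: when the process takes a page fault on the region, shm_nopage is called to set up the mapping from the process's virtual address to a physical page.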

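Implementation of shm_nopage

shm_nopage is called when a page fault occurs in an attached region. Its main job is to allocate a new physical page on first access and record it in the shared memory segment. Because every process attached to the segment is mapped to the same physical pages, the processes end up sharing this memory.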

```c
static struct page * shm_nopage(struct vm_area_struct * shmd, unsigned long address, int no_share)
{
    pte_t pte;
    struct shmid_kernel *shp;
    unsigned int idx;
    struct page * page;

    shp = *(struct shmid_kernel **) shmd->vm_private_data;
    /* Index of the faulting page within the segment. */
    idx = (address - shmd->vm_start + shmd->vm_offset) >> PAGE_SHIFT;

    spin_lock(&shm_lock);
again:
    pte = shp->shm_pages[idx];
    if (!pte_present(pte)) {
        if (pte_none(pte)) {
            /* First touch of this page: allocate and zero a physical page. */
            spin_unlock(&shm_lock);
            page = get_free_highpage(GFP_HIGHUSER);
            if (!page)
                goto oom;
            clear_highpage(page);
            spin_lock(&shm_lock);
            /* The slot may have been filled while the lock was dropped. */
            if (pte_val(pte) != pte_val(shp->shm_pages[idx]))
                goto changed;
        } else {
            ...
        }
        shm_rss++;
        /* Record the page in the segment so later faults reuse it. */
        pte = pte_mkdirty(mk_pte(page, PAGE_SHARED));
        shp->shm_pages[idx] = pte;
    } else
        --current->maj_flt;

done:
    get_page(pte_page(pte));
    spin_unlock(&shm_lock);
    current->min_flt++;
    return pte_page(pte);
    ...
}
```